The ‘1 Nojor’ media platform is now live in beta, inviting users to explore and provide feedback as we continue to refine the experience.

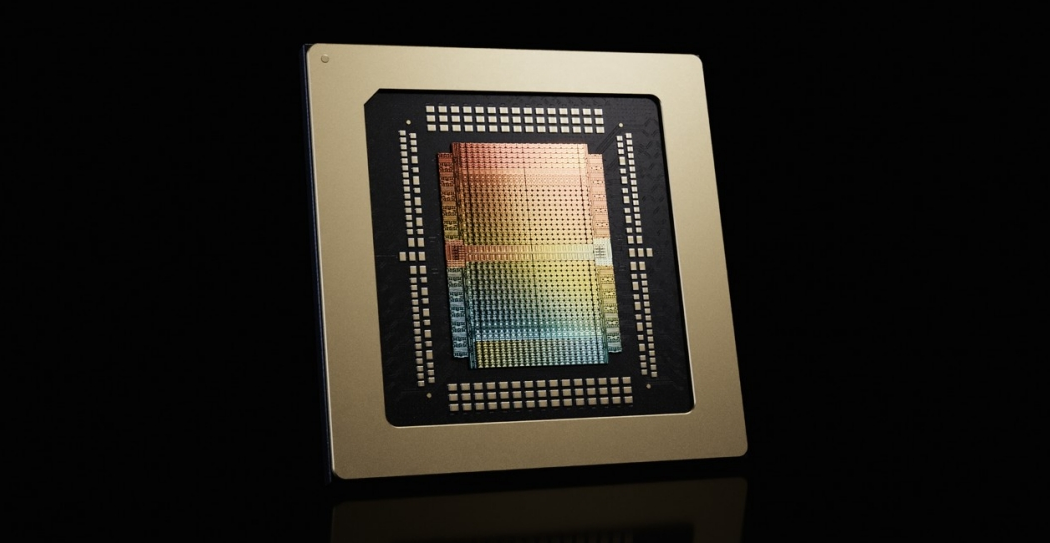

Nvidia Corp. launched the Groq 3 language processing unit (LPU) at its GTC 2026 developer conference in San Jose, introducing a dedicated inference chip designed for multi-agent AI workloads. The chip follows Nvidia’s $20 billion licensing deal with Groq Inc. in December, which included hiring Groq’s founder Jonathan Ross and President Sunny Madra. Groq 3 focuses on AI inference rather than training, offering faster memory performance to support low-latency and large-context agentic systems that automate human tasks.

The Groq 3 LPU is deployed in Groq 3 LPX server racks, each containing 256 LPUs with 128 gigabytes of static random-access memory (SRAM) and 40 petabytes per second of bandwidth. It is engineered to work alongside Nvidia’s new Vera Rubin NVL72 rack, which integrates Rubin GPUs and Vera CPUs to handle trillion-parameter models and million-token contexts. Nvidia said the combined systems deliver 35 times higher throughput per megawatt and 10 times greater revenue potential.

The Groq 3 LPX and Vera Rubin NVL72 are two of five new server racks unveiled by Nvidia, alongside the BlueField-4 STX storage and Spectrum-6 SPX networking systems. The lineup is aimed at expanding Nvidia’s data center presence amid surging demand for AI compute power.
